- Blog

- Nicholis louw numa numa

- Chromebook emulator download ds

- Ragdoll masters pc download

- Lan speed test different reading writing

- Krypton toolkit 4-4 key

- Kylesa discography kat

- Jupyter notebook tutorial making a site

- Nidhogg fire emblem

- Indecent proposal 1993 watch online free

- Brass quintet sheet music grazing in the grass

- Ange idi mulanguthu karuppasamy song mp3

- How to get ezdrummer authorization code

- Myanmar love story book

Summary: IPython HTML widgets for Jupyter. To check whether ipywidgets is installed, run `pip show ipywidgets` at a command prompt (for example, `C:\Users\zhaosong>pip show ipywidgets`). If the command prints the package details, ipywidgets has been installed.
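
The same check can also be made from inside a notebook cell. The snippet below is only a sketch of one possible way to do it with the standard library; the helper name `installed_version` is made up for this illustration and is not part of ipywidgets or pip.

```python
from importlib import metadata


def installed_version(package_name):
    """Return the installed version string, or None if the package is missing."""
    try:
        return metadata.version(package_name)
    except metadata.PackageNotFoundError:
        return None


# Prints a version string when ipywidgets is installed, otherwise None.
print(installed_version("ipywidgets"))
```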

The content is inside a div with the “mw-parser-output” class, so we can extract the quotes using a CSS selector. What do we see in the log output? The scraped items still include the source of the quote and HTML tags, and I don’t want either of them.

Let’s do it in the most trivial way, because this is not a blog post about extracting text from HTML. I am going to split the quote into lines, select the first one, and remove the HTML tags.

The proper way of processing the extracted content in Scrapy is to use a processing pipeline. As the input of the processor, we get the item produced by the scraper, and we must produce output in the same format (for example, a dictionary).

There is one strange part of the configuration, which is just a part of the custom_settings. What is the number in the dictionary, and what does it do? According to the documentation: “The integer values you assign to classes in this setting determine the order in which they run: items go through from lower valued to higher valued classes.”

What does the output look like after adding the processing pipeline item? Much better, isn’t it? Finally, I want to store the quotes in a CSV file.
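
The processing step described above could look roughly like the sketch below. It is not the original code of this post: the names `first_line_without_tags`, `ExtractFirstLine`, `CUSTOM_SETTINGS`, and `save_quotes`, the `quote` field, and the priority value `100` are all assumptions made for illustration. A Scrapy pipeline is a plain class with a `process_item(item, spider)` method, so the sketch does not need to import Scrapy. The CSV part uses the standard `csv` module rather than any Scrapy export feature.

```python
import csv
import re

# Deliberately trivial tag matcher; good enough for a sketch, not for real HTML parsing.
TAG_PATTERN = re.compile(r"<[^>]+>")


def first_line_without_tags(raw_quote):
    """Split the quote into lines, keep the first non-empty one, strip HTML tags."""
    lines = [line for line in raw_quote.splitlines() if line.strip()]
    if not lines:
        return ""
    return TAG_PATTERN.sub("", lines[0]).strip()


class ExtractFirstLine:
    """Hypothetical item pipeline: receives a dict-like item, returns a dict."""

    def process_item(self, item, spider):
        return {"quote": first_line_without_tags(dict(item)["quote"])}


# Assumed shape of the spider's custom_settings. The integer only sets the order
# in which pipelines run (lower values first); 100 is an arbitrary choice.
# "__main__" is the module name of classes defined directly in a notebook.
CUSTOM_SETTINGS = {
    "ITEM_PIPELINES": {"__main__.ExtractFirstLine": 100},
}


def save_quotes(items, path):
    """Write already-cleaned items to a CSV file with a single 'quote' column."""
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=["quote"])
        writer.writeheader()
        writer.writerows(items)
```

Calling `ExtractFirstLine().process_item({"quote": raw_html}, spider=None)` by hand is a quick way to try the cleaning logic without starting a crawl.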

As mentioned on its website, Beautiful Soup can parse anything we give it. Most commonly, it is used to extract data from HTML or XML documents. It is a simple and easy tool to use.

First of all, we will use Scrapy running in Jupyter Notebook. Unfortunately, there is a problem with running Scrapy multiple times in Jupyter. I have not found a solution yet, so let’s assume for now that we can run a CrawlerProcess only once.

In the first step, we need to define a Scrapy Spider. It consists of two essential parts: start URLs (a list of pages to scrape) and the selector (or selectors) that extracts the interesting part of a page. In this example, we are going to extract Marilyn Manson’s quotes from Wikiquote. Let’s look at the source code of the page.

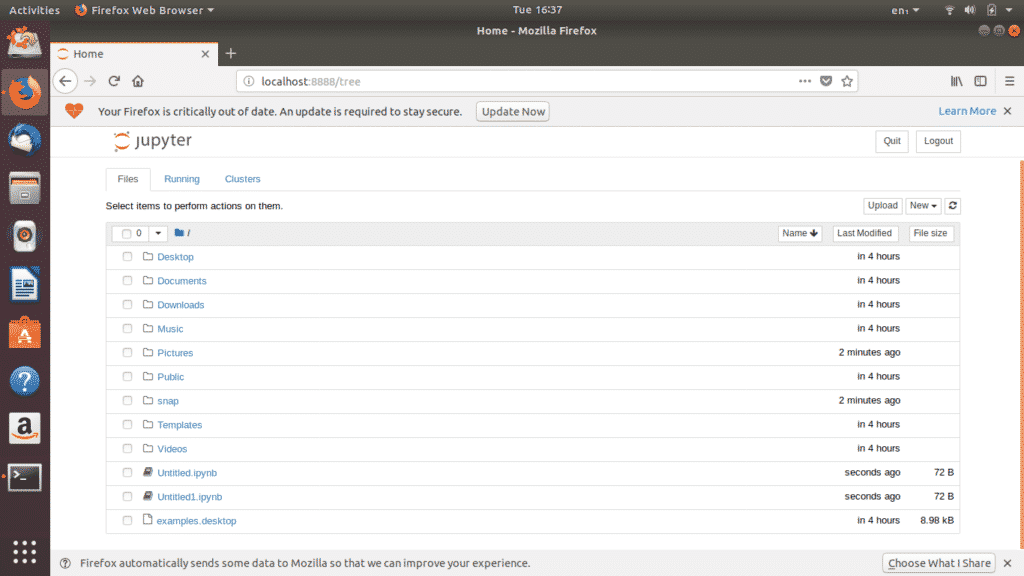

Prerequisites: Python setup. Download and install the Python setup, or run Python in the browser with Jupyter Notebook. Beautiful Soup is one of the most widely used Python libraries for web scraping.

This guide will explain the process of making web requests in Python using the Requests package and its various features. To perform web scraping, you should also impo.

Using Jupyter Notebook, you should start by importing the necessary modules (pandas, numpy, matplotlib.pyplot, seaborn). If you don't have Jupyter Notebook installed, I recommend installing it using the Anaconda Python distribution, which is available on the internet. To easily display the plots, make sure to include the line `%matplotlib inline`.
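
To give a feel for Beautiful Soup, here is a small sketch that parses an inline HTML string instead of a downloaded page, so it runs without network access. The sample markup and the function name `extract_list_items` are invented for this example. In a real scraper, the HTML would typically come from a web request made with the Requests package.

```python
from bs4 import BeautifulSoup

# Made-up markup that only imitates a content div with a list of quotes.
SAMPLE_HTML = """
<div class="mw-parser-output">
  <ul>
    <li>First sample quote</li>
    <li>Second sample quote</li>
  </ul>
</div>
"""


def extract_list_items(html):
    """Return the text of every list item directly inside the content div."""
    # "html.parser" is Python's built-in parser, so no extra parser is required.
    soup = BeautifulSoup(html, "html.parser")
    items = soup.select("div.mw-parser-output > ul > li")
    return [item.get_text(strip=True) for item in items]


print(extract_list_items(SAMPLE_HTML))
```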

How can we scrape a single website? In this case, we don’t want to follow any links. I will describe the topic of following links in another blog post.